- #INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS DRIVERS#

- #INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS UPDATE#

- #INSTALL APACHE SPARK DOCKER IMAGE IN WINDOWS CODE#

The NameNode is the master node, which persists the metadata in HDFS, and the DataNode is the slave node, which stores the data.

You can check the details about the Docker image here: fjardim.

Namenode and datanodes (HDFS), hive-metastore-postgresql (wittline/spark-worker:3.0.0).

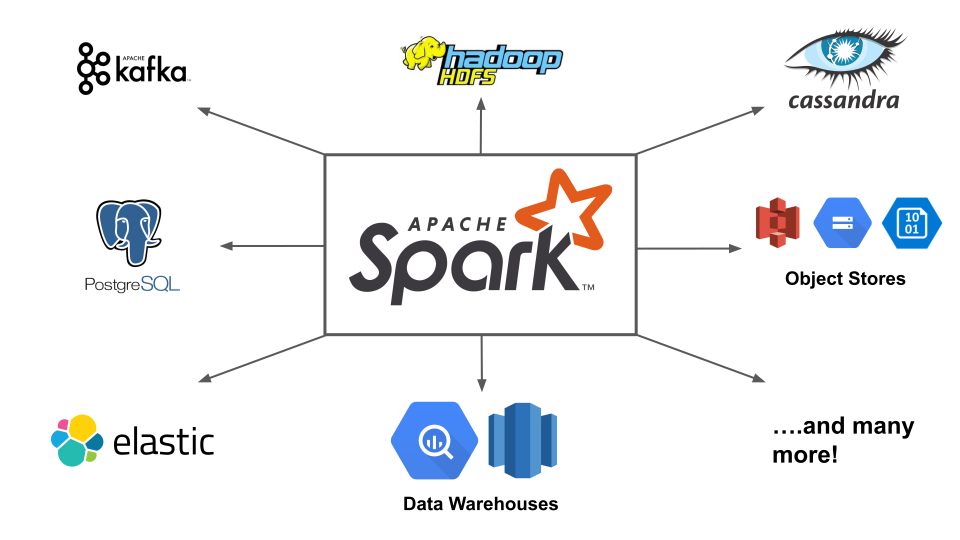

The Hive data warehouse facilitates reading, writing, and managing large datasets on HDFS storage using SQL. You can check the details about the Docker image here: wittline.

Apache Spark manages all the complexities of creating and managing global and session-scoped views and SQL managed and unmanaged tables, in memory and on disk. Spark SQL is one of the main components of Apache Spark, integrating relational processing with Spark's functional programming.

Apache Spark does not need Hive. The idea of adding Hive to this architecture is to have a metadata store for tables and views that can be reused in a workload, which avoids re-creating the queries for these tables. By default, Apache Spark uses the Apache Hive metastore, located at user/hive/warehouse, to persist all the metadata about the tables created. For example, a global temporary view is visible across multiple SparkSessions, so in this case we can combine data from different SparkSessions that do not share the same Hive metastore.
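As a minimal sketch of the global temporary view behaviour described above, the PySpark snippet below registers a view in one session and reads it from a second session of the same application. The view name `items`, the sample rows, and the helper names are hypothetical; `global_temp` is the default database Spark uses for global temporary views. `pyspark` is imported inside `demo()` so the small naming helper can be used without a Spark installation.

```python
GLOBAL_TEMP_DB = "global_temp"  # default database Spark reserves for global temporary views


def qualified_global_view(view_name: str) -> str:
    """Return the name under which a global temporary view must be queried."""
    return f"{GLOBAL_TEMP_DB}.{view_name}"


def demo() -> None:
    # Imported lazily: only needed when the demo is actually run.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.appName("global-view-demo").getOrCreate()
    df = spark.createDataFrame([(1, "a"), (2, "b")], ["id", "label"])

    # Register the DataFrame as a global temporary view (hypothetical name "items").
    df.createOrReplaceGlobalTempView("items")

    # A second session of the same Spark application can still read the view,
    # as long as it is qualified with the global_temp database.
    other = spark.newSession()
    other.sql(f"SELECT * FROM {qualified_global_view('items')}").show()

    spark.stop()
```

Calling `demo()` from a notebook cell should print the two sample rows from the second session. A session-scoped view created with `createOrReplaceTempView` would not be visible from `other`.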


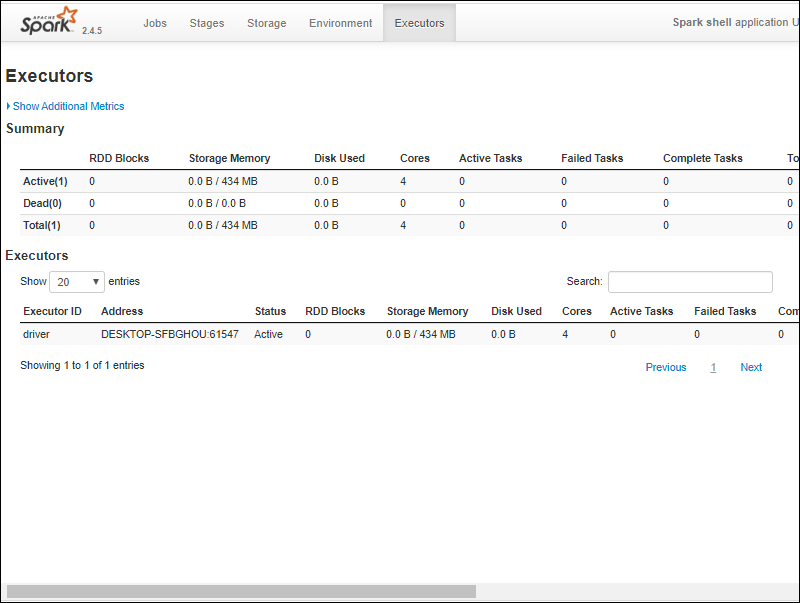

The PySpark code will be written in Jupyter notebooks, and we will submit the code to the standalone cluster using the SparkSession.

The table above shows that one way to recognize a standalone configuration is by observing who the master node is. A standalone configuration can only run Apache Spark applications, and Spark applications are submitted directly to the master node.
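A minimal sketch of how a notebook could attach to the standalone master through the SparkSession follows. The host name `spark-master` and the application name are assumptions for illustration and are not taken from the original setup; 7077 is the default port of a standalone master.

```python
def standalone_master_url(host: str, port: int = 7077) -> str:
    """Build a spark:// URL; 7077 is the standalone master's default port."""
    return f"spark://{host}:{port}"


def create_session(host: str = "spark-master", app_name: str = "notebook-app"):
    """Create (or reuse) a SparkSession bound to a standalone master.

    Both default arguments are hypothetical placeholders.
    """
    # Imported lazily so the URL helper works without a Spark installation.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .master(standalone_master_url(host))  # submit directly to the master node
        .appName(app_name)
        .getOrCreate()
    )
```

In a notebook cell, `spark = create_session()` would then give a session whose jobs are scheduled by the standalone master rather than by an external cluster manager.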


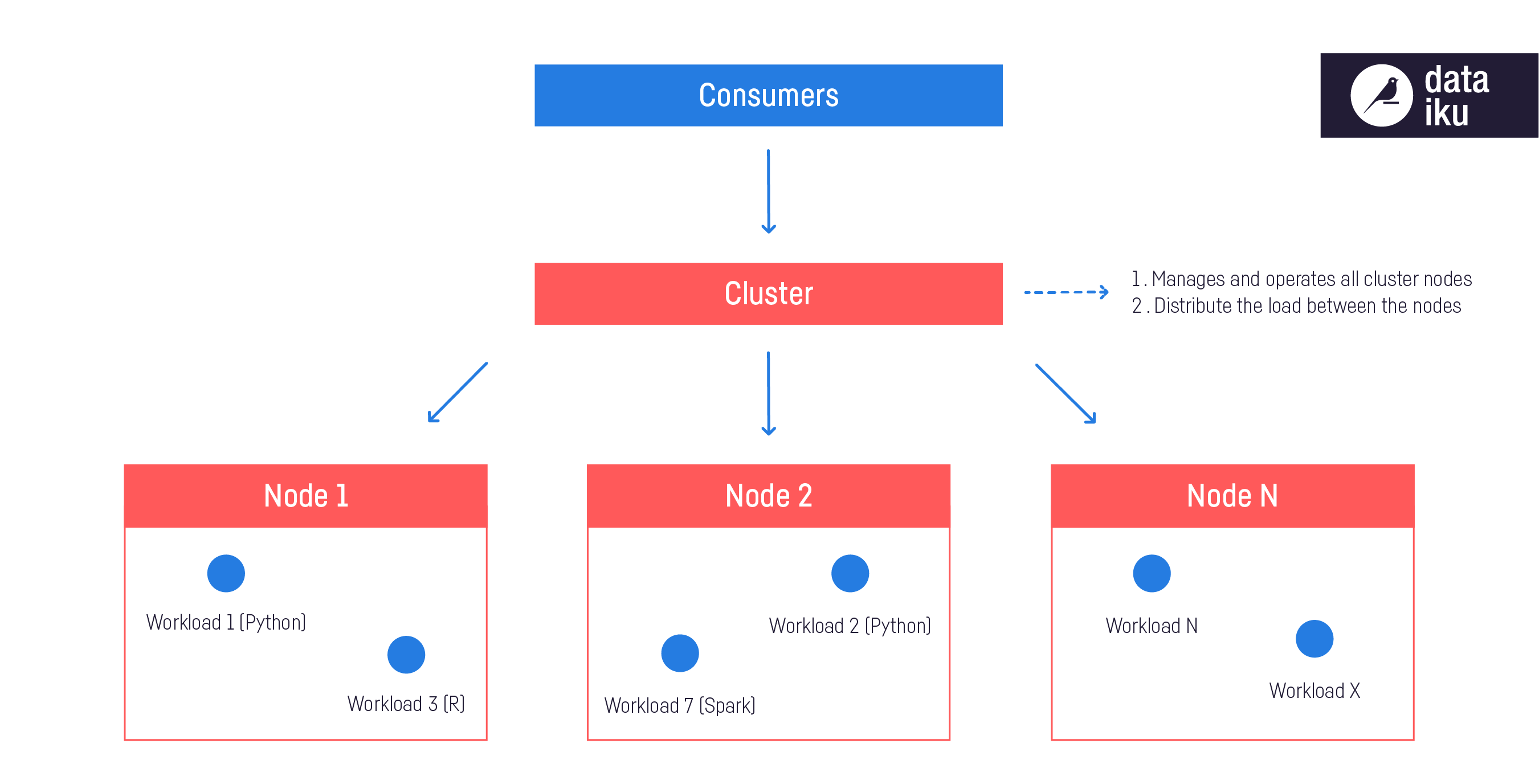

What is a standalone cluster?

There are different ways to run an Apache Spark application: Local mode, Standalone mode, YARN, Kubernetes, and Mesos. These are the ways in which Apache Spark assigns resources to its drivers and executors. The last three are cluster managers. Everything is based on who the master node is, as the table shows.

You can check the details about the Docker image here: wittline.
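The run modes listed above are selected in practice through the master URL passed to Spark. The sketch below lists the usual URL shape for each mode and classifies a given URL. `HOST` and `PORT` are placeholders, and the function and dictionary names are hypothetical.

```python
# Typical master URL shapes per run mode (HOST and PORT are placeholders).
MASTER_URL_FORMATS = {
    "local": "local[*]",                      # driver and executors in one JVM
    "standalone": "spark://HOST:7077",        # Spark's own master node
    "yarn": "yarn",                           # cluster location comes from the Hadoop config
    "kubernetes": "k8s://https://HOST:PORT",  # Kubernetes API server
    "mesos": "mesos://HOST:5050",             # Mesos master
}


def deployment_mode(master_url: str) -> str:
    """Infer the run mode from a Spark master URL."""
    if master_url.startswith("local"):
        return "local"
    if master_url.startswith("spark://"):
        return "standalone"
    if master_url == "yarn":
        return "yarn"
    if master_url.startswith("k8s://"):
        return "kubernetes"
    if master_url.startswith("mesos://"):
        return "mesos"
    raise ValueError(f"Unrecognized master URL: {master_url!r}")
```

For example, `deployment_mode("spark://spark-master:7077")` returns `"standalone"`, which matches the idea that the mode is recognized by who the master node is.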


    import io.delta.tables.*;
    import .functions;

    DeltaTable deltaTable = DeltaTable.forPath("/tmp/delta-table");

    // Update every even value by adding 100 to it
    deltaTable.
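For the notebooks, a PySpark counterpart of this update could look like the sketch below. It assumes the `delta-spark` package is installed, that the session is configured with the Delta Lake extensions, and that the table at `/tmp/delta-table` has a numeric column named `id`; the column name is an assumption, since the fragment above does not show it. `model_update` is a plain-Python illustration of the intended effect, not part of the Delta API.

```python
def model_update(ids):
    """Plain-Python model of the update: add 100 to every even id."""
    return [i + 100 if i % 2 == 0 else i for i in ids]


def update_even_ids(spark, path="/tmp/delta-table"):
    """Add 100 to every even `id` in the Delta table stored at `path`."""
    # Imported lazily: requires the delta-spark package and a Delta-enabled session.
    from delta.tables import DeltaTable
    from pyspark.sql.functions import expr

    delta_table = DeltaTable.forPath(spark, path)

    # Rows matching the condition are rewritten; all other rows stay untouched.
    delta_table.update(
        condition=expr("id % 2 == 0"),
        set={"id": expr("id + 100")},  # assumed column name
    )
    return delta_table.toDF()
```

Under these assumptions, `update_even_ids(spark).show()` would display the table after the update, with even ids shifted by 100 in the same way `model_update` shifts a Python list.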